Four major U.S. technology companies say they are joining forces to remove terrorist-related content from their online services.

YouTube, Facebook, Twitter, and Microsoft announced on December 5 that they planned to create a shared database of the videos and images that they have removed from their sites.

The companies said the database, hosted by Facebook, will enable each of them to quickly identify and remove matching posts on its own platform.

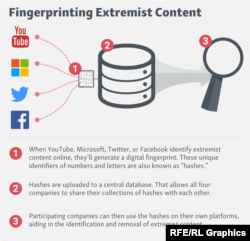

The database will store "hashes" of images and videos, unique digital fingerprints created for each piece of content, rather than the content itself.

Photos and videos being uploaded to the participating services will have their hash checked against the database. If it matches a hash stored there, a notification will be sent to the company to which the content has been uploaded so that it can be manually reviewed for possible removal.

The joint database is to start operating next year, and more companies may be brought into the partnership.

"We hope this collaboration will lead to greater efficiency as we continue to enforce our policies to help curb the pressing global issue of terrorist content online," Facebook said in a statement.

"There is no place for content that promotes terrorism on our hosted consumer services," a spokesman for Twitter said, adding that the initiative was aimed at the "most extreme and egregious" images and videos.

Tech companies have come under pressure from Western governments to do more to remove content related to terrorist organizations and far-right groups.

The European Union's executive body has warned that the EU would introduce regulation mandating swift action against hateful and racist content if tech companies did not respond more quickly.

Social media has become a powerful recruitment tool for the Islamic State militant group, enabling it to expand its reach around the globe through posts that radicalize followers and inspire them to plan lone-wolf attacks.

A number of tech firms such as YouTube and Facebook have begun to automatically remove extremist posts.

But many providers have relied until now mainly on users to flag objectionable material, which is then individually reviewed by human editors who delete postings found in violation.

Twitter suspended 235,000 accounts between February and August and has expanded the teams reviewing extremist posts.

An international group comprising some of the main U.S. tech firms said in a report last week that governments seeking to curtail the spread of extremist content online risk jeopardizing free speech and privacy rights.

The Global Network Initiative said on November 30 that companies should not be pressured by governments to change their terms of service, and that demands to restrict content need to be consistent with existing legal frameworks.

Members of the group include Microsoft, Facebook, Google, LinkedIn, and Yahoo.